RDP is more efficient and performs better than VNC, and is probably more secure.

Installation

The advantage of x11vnc is that you get the same desktop that is open on your workstation, so two people can share the screen. The drawback is that a user must already be logged in to the X session before you can use it. If you restart the machine, you need some tricks to run x11vnc as root in order to reach the login screen.

XRDP is better, but by default it opens a different session each time, and it is not trivial to attach to the session that is currently active on your workstation.

Anyway, if you use CentOS 7 you will have problems using XRDP because of some issues with SELinux (the security system for applications in Red Hat).

XRDP can be installed from the EPEL repo (Extra Packages for Enterprise Linux).

# install EPEL repo

$ sudo yum install epel-release

$ sudo yum update

# install XRDP

$ sudo yum install xrdp tigervnc-server

$ sudo service xrdp start

$ sudo service xrdp-sesman start

After installing, enable the service so that it starts at boot

$ sudo systemctl enable xrdp.service

Confirm that XRDP is running

$ sudo netstat -antup | grep xrdp

tcp 0 0 0.0.0.0:3389 0.0.0.0:* LISTEN 1508/xrdp

tcp 0 0 127.0.0.1:3350 0.0.0.0:* LISTEN 1507/xrdp-sesman
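The check above can also be scripted. The following is only a minimal sketch: check_xrdp_listeners is a hypothetical helper name (not part of xrdp), and it assumes the netstat column layout shown above, with the local address in column 4 and the state in column 6. It reads netstat output on standard input and succeeds only if both the xrdp port (3389) and the xrdp-sesman port (3350) are in LISTEN state.

```shell
# Hypothetical helper: reads `netstat -antup` output on stdin.
# Exit status 0 only if listeners on ports 3389 (xrdp) and
# 3350 (xrdp-sesman) are both present; non-zero otherwise.
check_xrdp_listeners() {
    awk '
        $6 == "LISTEN" && $4 ~ /:3389$/ { xrdp = 1 }
        $6 == "LISTEN" && $4 ~ /:3350$/ { sesman = 1 }
        END { exit !(xrdp && sesman) }
    '
}
```

It could be used as `sudo netstat -antup | check_xrdp_listeners && echo "xrdp is listening"`. If you change the port in the configuration, the numbers in the helper need to change as well.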

But the job is not finished. By default XRDP listens on port 3389, but the service is not allowed to listen on this port by default. You need to fix both the security policy and the firewall: SELinux must let XRDP listen on 3389, and the firewall must allow external connections to 3389.

# allow in SELinux (use one of the two options; option 2 is preferred)

## option 1

$ sudo chcon --type=bin_t /usr/sbin/xrdp

$ sudo chcon --type=bin_t /usr/sbin/xrdp-sesman

## option 2

$ sudo semanage fcontext -a -t bin_t /usr/sbin/xrdp

$ sudo semanage fcontext -a -t bin_t /usr/sbin/xrdp-sesman

$ sudo restorecon -v /usr/sbin/xrdp*

# using chcon updates the SELinux context temporarily until the next relabelling.

# A permanent method is to use semanage and restorecon

# open the firewall for external connections (in case the port is not open by default)

$ sudo firewall-cmd --permanent --zone=public --add-port=3389/tcp

$ sudo firewall-cmd --reload

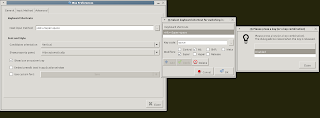

Configuration

The config file is in

/etc/xrdp/xrdp.ini

The default port is 3389, and you can change it here:

[globals]

# xrdp.ini file version number

ini_version=1

bitmap_cache=yes

bitmap_compression=yes

port=3389

The setting for reconnecting to the previous session is the port attribute in the "[xrdp1]" section. Set it to ask-1:

[xrdp1]

name=sesman-Xvnc

lib=libvnc.so

username=ask

password=ask

ip=127.0.0.1

port=ask-1

delay_ms=2000

With ask, you are prompted for a port in the login dialog. If you do not give a port, the -1 means that a new one is assigned.
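Because port appears in both [globals] and [xrdp1], it is easy to read or edit the wrong one. The following is a rough sketch of a section-aware lookup: ini_get is a hypothetical helper (not an xrdp tool), and it assumes simple key=value lines with no spaces around the equals sign, as in the fragments above.

```shell
# Hypothetical helper: print the value of a key inside one section
# of an ini file.
#   $1 = file, $2 = section name without brackets, $3 = key
# Assumes plain key=value lines; a value that itself contains "="
# would be cut at the second "=".
ini_get() {
    awk -F= -v section="[$2]" -v key="$3" '
        /^\[/ { in_section = (tolower($0) == tolower(section)); next }
        in_section && $1 == key { print $2; exit }
    ' "$1"
}
```

For example, `ini_get /etc/xrdp/xrdp.ini globals port` reads the listening port, while `ini_get /etc/xrdp/xrdp.ini xrdp1 port` reads the session setting described above. The section name is matched case-insensitively.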

If you can't remember the current port of your session, see the next section.

How to find the current XRDP session port

You can log in over SSH and find the port number with

netstat -tulpn | grep vnc

and you will get something like the following

tcp 0 0 127.0.0.1:5910 0.0.0.0:* LISTEN 5365/Xvnc

and then you know 5910 was the port you connected to.
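If several sessions are open, picking the port out by eye gets tedious. As a minimal sketch, the hypothetical helper xvnc_ports below reads netstat output on standard input and prints only the local port of each listening Xvnc process. It assumes the column layout shown above (local address in column 4, state in column 6, PID/program in column 7).

```shell
# Hypothetical helper: reads `netstat -tulpn` output on stdin and
# prints the local port of every listening Xvnc process, one per line.
xvnc_ports() {
    awk '$6 == "LISTEN" && $7 ~ /\/Xvnc$/ {
        n = split($4, parts, ":")   # port is the last ":"-separated field
        print parts[n]
    }'
}
```

It could be used as `sudo netstat -tulpn | xvnc_ports`. Running netstat without root may hide the program name for processes owned by other users, in which case the helper prints nothing for them.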

More information about XRDP, x11vnc and VNC can be found at http://c-nergy.be/blog/?p=6063